Introduction

In recent years, advances in AI and their impact on society have been widely covered in the media. Even so, leaving aside the question of consciousness, current AI is not yet comparable to humans in versatility. Today’s AIs are narrow, in the sense that each is built for specific types of tasks, whereas humans have general intelligence that lets them perform a wide range of tasks not anticipated at the time of their birth. We emphasize general intelligence because, besides being a worthwhile research objective in its own right, AGI (artificial general intelligence) would be able to substitute for almost all the work humans currently perform, so its impact on society would be substantial. Some research organizations (e.g., Google DeepMind) have begun working toward AGI, which further underscores its importance.

WBAI promotes research and development based on the WBA (whole brain architecture) approach, which aims to create human-like artificial general intelligence (AGI) by learning from the architecture of the entire brain. This article explains the current prospects of this approach.

Human-like AGI

The WBA approach sets out to learn from the architecture of the entire brain as a means of creating human-like AGI. Besides the generality mentioned above, a key point here is that the AGI should be “human-like.” What human beings can do is not fixed at birth; they have “general intelligence,” which enables them to perform new tasks through training, instruction, and ingenuity. One reason for aiming at human-like AGI is that human beings are a living example of general intelligence to learn from. Another reason is that human-like AGI would be easier for humans to understand; creating AGI that we cannot understand may be undesirable for people and for society.

When actually building something human-like, the definition of “human” can become a problem. While there are many possible definitions, let us here regard human beings as “animals that use language.”

Acquiring “animalhood” is important for AGI: animals must recognize and act appropriately on various things in the physical world in order to survive, and AGI must do the same in order to carry out tasks in the world. The flexible cognitive abilities of animals that underlie general intelligence have not yet been reproduced in AI, so drawing on animals and their brains would be an effective way to realize those abilities.

Let us now turn to the use of human language. Since human language is general in that it can express any procedure or thought, human-like AGI would be animal-like AI that uses human language. Note that humans, like animals, live in the real world, and human language has meaning in relation to things in that world. This means that human-like AGI should be AI that uses human language in relation to things in the real world. Conventional computers are general in the sense that they can carry out any procedure specified in a computer language, but that does not mean they can use human language in relation to things in the real world.

Brain Architecture

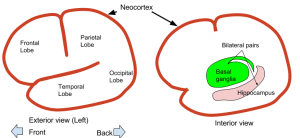

To discuss learning from the architecture of the whole brain, let us look at the brain’s architecture. The human brain consists of the cerebrum, the cerebellum, and the brainstem. Here we focus on the cerebrum, which is considered most relevant to intelligence. The cerebrum consists of the neocortex on its surface, interior parts, and the nerve fibers connecting them. Although the function of the neocortex differs from area to area, its structure is known to be largely uniform throughout. The hippocampus and the basal ganglia are the major interior parts. The functions of these parts (Fig. 1) are roughly as follows:

- Neocortex: learning spatiotemporal patterns

- Hippocampus: transferring episodic memory to the neocortex

- Basal ganglia: learning behavior based on rewards

Much is known about the structure and function of these parts, and attempts have been made to model and simulate them on the basis of that knowledge.

A central hope of the WBA approach is that modeling just a few types of brain parts might lead to human-like AI. The challenge then lies in designing the architecture that combines these parts. The brain’s wiring is specified by its pattern of nerve-fiber connections, called the connectome, about which much has been learned through many years of research.

Fig. 1 Cerebrum
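The idea that the connectome specifies how modeled brain parts are combined can be made concrete by treating the wiring as a directed graph. The following is a minimal Python sketch under stated assumptions: the region names and edges in `CONNECTOME` are a hypothetical, heavily simplified set chosen for illustration (not actual connectome data), and the helper functions `reachable_from` and `inputs_to` are likewise hypothetical.

```python
from collections import deque
from typing import Dict, List

# Hypothetical, heavily simplified wiring between modeled brain parts.
# Each key projects to the regions in its list (directed edges).
CONNECTOME: Dict[str, List[str]] = {
    "sensory_cortex": ["association_cortex"],
    "association_cortex": ["prefrontal_cortex", "hippocampus"],
    "hippocampus": ["association_cortex"],
    "prefrontal_cortex": ["basal_ganglia", "motor_cortex"],
    "basal_ganglia": ["thalamus"],
    "thalamus": ["prefrontal_cortex", "motor_cortex"],
    "motor_cortex": [],
}


def reachable_from(source: str, graph: Dict[str, List[str]]) -> List[str]:
    """Return the regions reachable from `source`, in breadth-first order."""
    seen = {source}
    order: List[str] = []
    queue = deque([source])
    while queue:
        region = queue.popleft()
        for target in graph.get(region, []):
            if target not in seen:
                seen.add(target)
                order.append(target)
                queue.append(target)
    return order


def inputs_to(region: str, graph: Dict[str, List[str]]) -> List[str]:
    """Return the regions that project directly to `region`, sorted by name."""
    return sorted(src for src, targets in graph.items() if region in targets)
```

For example, `inputs_to("motor_cortex", CONNECTOME)` lists the modules whose outputs a motor model would have to accept. A real design would more likely use weighted, directional projection data between many more regions; the plain adjacency list is only meant to show the data structure.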

The neocortex, which occupies a large part of the cerebrum, has different functions depending on the area. These differences arise from how each area is connected to others. The areas of the neocortex can be roughly divided as follows:

- Perceptual areas: the visual, auditory, and somatosensory cortices. They form hierarchies running from areas that handle simple patterns to areas that handle more complex ones. They are located in the occipital lobe (visual cortex), the temporal lobe (auditory cortex), and the parietal lobe (somatosensory cortex).

- Execution areas: these form a hierarchy running from the primary motor area, which sends signals down the spinal cord to the muscles, to areas that handle more complex patterns. They are located in the frontal lobe (motor and prefrontal cortices).

- Association areas: these integrate various sensory and motor signals and represent where, and in what form, patterns exist. They are located mostly in the upper posterior part of the cerebrum (parietal lobe). Here too, the areas form a hierarchy according to the complexity of patterns.

Relationship with things

As stated above, human-like AGI would be AI that uses human language in relation to things in the world. The brain, likewise, processes information in relation to things in the world. The neocortex can perceive things in the outside world, recognize how perceived things are arranged through association, and plan and execute actions in the world.

Learning and memory are essential for acting appropriately. Acting appropriately requires the (statistical) modeling of spatiotemporal patterns carried out by the neocortex. Deciding which actions are good for the individual requires reward-based learning (reinforcement learning) in the basal ganglia. The hippocampus “etches” memories of experiences into the neocortex so that they can be recalled and used later.
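Reward-based learning of the kind attributed to the basal ganglia is commonly formalized as reinforcement learning. As a minimal sketch (not a model of the basal ganglia itself), tabular Q-learning shows how action values are learned from a reward signal through a temporal-difference (TD) error, the quantity often compared to dopamine reward-prediction signals. The five-cell corridor environment and the parameters `alpha` (learning rate), `gamma` (discount), and `epsilon` (exploration rate) are illustrative assumptions.

```python
import random

N_STATES = 5          # hypothetical corridor: cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)    # move left or move right


def step(state, action):
    """Hypothetical environment: move along the corridor; reward 1.0 at the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning; returns q[state][action_index]."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy action choice; ties are broken at random.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # TD error: reward + discounted best next value - current estimate.
            # The goal is terminal, so it contributes no future value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


def greedy_policy(q):
    """Best action in each non-goal cell according to the learned values."""
    return [ACTIONS[0] if q[s][0] > q[s][1] else ACTIONS[1] for s in range(N_STATES - 1)]
```

After training, `greedy_policy(q_learning())` should point right (`+1`) in every cell: the reward at the goal has propagated backward as discounted value, so the agent has learned which actions are good without ever being told the route.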

Machine learning and human intelligence

The WBA approach draws on machine learning techniques as well as on the brain. Deep learning with artificial neural networks is currently a ‘hot’ machine learning technique. In deep learning, information is processed by successive layers of neural circuits. By feeding their output back to their input, neural circuits can process not only spatial patterns but also temporal patterns; such networks are called recurrent neural networks (RNNs). Since expressions in human language are temporal patterns, processing temporal patterns is essential for human-like AGI. RNNs are known to be able to handle grammars like those of human languages. RNN models that convert one spatiotemporal pattern into another have also been proposed. With such conversion, patterns of language expressions can be translated into “thought patterns” inside the AI (i.e., language understanding), and thought patterns can be translated back into patterns of language expressions (i.e., utterance).
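The feedback loop that lets a network handle temporal patterns can be sketched as a minimal Elman-style recurrent cell in plain Python. Here the hidden state, rather than the final output, is fed back (feeding back the output itself, as in Jordan networks, is a variant). The update is h_t = tanh(W_x x_t + W_h h_{t-1} + b); the weights `W_X`, `W_H`, and `B` are hand-picked, untrained illustrative values, and the function names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def rnn_step(x: List[float], h_prev: List[float],
             w_x: Matrix, w_h: Matrix, b: List[float]) -> List[float]:
    """One Elman-style step: h_t = tanh(W_x x_t + W_h h_{t-1} + b)."""
    h = []
    for i in range(len(b)):
        total = b[i]
        total += sum(w_x[i][j] * x[j] for j in range(len(x)))
        # Recurrent term: the previous hidden state is fed back in.
        total += sum(w_h[i][k] * h_prev[k] for k in range(len(h_prev)))
        h.append(math.tanh(total))
    return h


def run_rnn(sequence: List[List[float]], w_x: Matrix, w_h: Matrix,
            b: List[float]) -> List[float]:
    """Feed a sequence one element at a time; return the final hidden state."""
    h = [0.0] * len(b)
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
    return h


# Illustrative 1-input, 2-unit network (hand-picked, untrained weights).
W_X = [[1.0], [0.5]]
W_H = [[0.5, 0.0], [0.0, 0.9]]
B = [0.0, 0.0]
```

For instance, `run_rnn([[1.0], [0.0]], W_X, W_H, B)` and `run_rnn([[0.0], [0.0]], W_X, W_H, B)` end on the same input but produce different final states, and `[[1.0], [0.0]]` and `[[0.0], [1.0]]` contain the same inputs in a different order yet also end in different states: the recurrence keeps a trace of what came before, which is what temporal patterns such as sentences require.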

Human-like AI should learn not only the correspondence between language and thought but also how to use language, for example through reinforcement learning and case-based learning. It should also learn, by interacting with humans, which expressions to use on which occasions. Beyond these abilities, AGI must be able to plan; AI systems capable of planning already exist.
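Planning, in its simplest classical form, means searching a model of how actions change the world for a sequence of actions that reaches a goal. The sketch below uses breadth-first search over a hypothetical, hand-written state-transition table (`TRANSITIONS`); the state and action names and the `plan` function are illustrative assumptions, and this is not a proposal for how WBA would implement planning. Practical planners use far richer representations and heuristics.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

# Hypothetical world model: state -> {action: resulting state}.
TRANSITIONS: Dict[str, Dict[str, str]] = {
    "at_door": {"open_door": "door_open"},
    "door_open": {"walk_in": "in_room", "close_door": "at_door"},
    "in_room": {"pick_up_cup": "holding_cup"},
    "holding_cup": {},
}


def plan(start: str, goal: str,
         model: Dict[str, Dict[str, str]]) -> Optional[List[str]]:
    """Return a shortest action sequence from start to goal, or None if unreachable."""
    frontier = deque([start])
    came_from: Dict[str, Tuple[str, str]] = {}  # state -> (previous state, action)
    visited = {start}
    while frontier:
        state = frontier.popleft()
        if state == goal:
            # Walk back through came_from to recover the actions in order.
            actions: List[str] = []
            while state != start:
                prev, action = came_from[state]
                actions.append(action)
                state = prev
            return actions[::-1]
        for action, nxt in model.get(state, {}).items():
            if nxt not in visited:
                visited.add(nxt)
                came_from[nxt] = (state, action)
                frontier.append(nxt)
    return None
```

Here `plan("at_door", "holding_cup", TRANSITIONS)` yields `["open_door", "walk_in", "pick_up_cup"]`. Breadth-first search is chosen only because it is short and guarantees the fewest steps when every action has equal cost.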

Roadmap

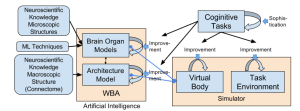

With the WBA approach, we envision the following research and development process (Fig. 2).

Fig. 2 WBA research and development process

As an AI, WBA consists of models of brain organs and the connections between them. Each organ is modeled with reference to its microscopic (fine) structure and using machine learning techniques. The connections are modeled on the basis of information about macroscopic connections in the brain (the connectome). WBA is embedded in a virtual body (robot) in a simulator and tested on various tasks. Development begins with simple tasks and simple models; as more sophisticated tasks are given, more sophisticated models are developed.
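The development loop described here, in which tasks of increasing difficulty drive the development of more sophisticated models, can be outlined as a small curriculum harness. This is a minimal sketch in which everything is hypothetical: the `Task` and `Agent` types, the `difficulty` and `pass_threshold` fields, the single `capacity` number standing in for model sophistication, and the `refine` callback standing in for the real work of improving organ models and their connectome-based wiring.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Task:
    name: str
    difficulty: int          # hypothetical difficulty scale
    pass_threshold: float    # score required before moving to the next task


@dataclass
class Agent:
    capacity: int            # hypothetical stand-in for model sophistication

    def attempt(self, task: Task) -> float:
        """Toy scoring in place of a simulator run: success iff capacity suffices."""
        return 1.0 if self.capacity >= task.difficulty else 0.0


def run_curriculum(agent: Agent, tasks: List[Task],
                   refine: Callable[[Agent], Agent],
                   max_refinements: int = 10) -> List[str]:
    """Present tasks from simple to complex, refining the model after each failure."""
    log: List[str] = []
    for task in sorted(tasks, key=lambda t: t.difficulty):
        for _ in range(max_refinements + 1):
            if agent.attempt(task) >= task.pass_threshold:
                log.append(f"passed {task.name} with capacity {agent.capacity}")
                break
            agent = refine(agent)   # e.g. a more detailed module model
        else:
            # No attempt passed: stop rather than move on to harder tasks.
            log.append(f"stuck on {task.name}")
            return log
    return log
```

As a usage example, `run_curriculum(Agent(1), [Task("maze", 3, 1.0), Task("reach", 1, 1.0)], lambda a: Agent(a.capacity + 1))` presents the easier task first and returns `["passed reach with capacity 1", "passed maze with capacity 3"]`. In practice `attempt` would run the embodied model in the simulator, and `refine` would be a research step rather than a function call.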

This development process advances progressively, starting from simple functions that can be realized with the current state of the art. It is important to build a working system that integrates models of the neocortex, the basal ganglia, and the hippocampus. It would be possible to build, within one to two years, such models that can solve tasks rodents can perform. Humans, however, use language. Observing human children will provide clues for creating AI that can use language. Learning social relations may be key for AI to learn language and thus become AGI, since children learn language by becoming aware of what other people are referring to.

We hope to see human-like AGI developed within decades through this development process. The pace of progress will depend on the number of people working intensively on it, so we intend to help more people acquire the necessary expertise and collaborate on research and development in this effort.
